    val numberOfAssertsWithExecutionTime: List =
      …
    } yield ((id, result.totalAsserts, time))

The values contained in the `numberOfAssertsWithExecutionTime` list are: `List…`

All the generators inside a for-comprehension must share the same type they loop over. In our previous example, both were instances of `List`. So, we can mix a generator of type `List` with another generator of type `List`, even when their element types differ.

In the previous example, we saw how the semantics of a for-comprehension are equal to that of a sequence of operations on streams or sequences. In Scala, the for-comprehension is nothing more than syntactic sugar for a sequence of calls to one or more of the methods `foreach`, `map`, `flatMap`, and `withFilter`. We can use for-comprehension syntax on every type that defines such methods. Let’s see an example.

First of all, let’s define a class to work on. Let’s create a `Result` class that is a wrapper around an expression result:

    case class Result[A](result: A)

First things first, we’ll try to print to standard output the value of a `Result` using a for-comprehension:

    val result: Result[Int] = Result(42)

The compiler tells us that we cannot use the variable `res` in the for-comprehension: `Value foreach is not a member of .ForComprehension.Result`.

So, the Scala compiler desugars the above construct to a call to the `foreach` method. Let’s add it to the `Result` type:

    def foreach(f: A => Unit): Unit = f(result)

Now, it’s time to give the yield body some dignity.
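To make the desugaring concrete, here is a minimal sketch of the `Result` wrapper with `foreach` plus two more methods. The `map` and `flatMap` definitions, the `ResultDemo` object, and the values `incremented` and `summed` are assumptions added for illustration; they are one plausible way to support `yield`, not necessarily the one the original article goes on to show.

```scala
object ResultDemo {
  // Wrapper around a single value, as in the text, extended with the
  // methods the compiler looks for when it desugars a for-comprehension.
  case class Result[A](result: A) {
    // Used by: for { x <- r } sideEffect(x)
    def foreach(f: A => Unit): Unit = f(result)

    // Assumed addition. Used by: for { x <- r } yield g(x)
    def map[B](f: A => B): Result[B] = Result(f(result))

    // Assumed addition. Used by every generator except the last
    // when a for-comprehension has several generators.
    def flatMap[B](f: A => Result[B]): Result[B] = f(result)
  }

  val result: Result[Int] = Result(42)

  // Desugars to: result.map(res => res + 1)
  val incremented: Result[Int] = for { res <- result } yield res + 1

  // Desugars to: result.flatMap(a => Result(8).map(b => a + b))
  val summed: Result[Int] = for {
    a <- result
    b <- Result(8)
  } yield a + b

  def main(args: Array[String]): Unit = {
    // Desugars to: result.foreach(res => println(res))
    for { res <- result } println(res)
    println(incremented)
    println(summed)
  }
}
```

Removing `foreach` from this sketch should bring back the "not a member" compile error for the first loop, which is a quick way to confirm which method each form of the for-comprehension depends on.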

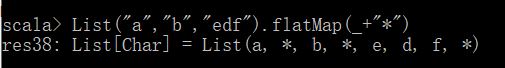

Spark’s map() and flatMap() functions are modeled off their equivalents in the Scala programming language, so what we’ll learn in this article can be applied to those too. Let’s go ahead and look at some examples to help understand the difference between map() and flatMap().

We can also say that flatMap transforms an RDD of length N into a collection of N collections, then flattens these into a single RDD of results. In the example below, the data1 RDD, which is the output of the map operation, has the same number of elements as the data RDD, but the data2 RDD does not. We can also observe that the data2 RDD is a flattened version of the data1 RDD.

    scala> val data = sc.textFile("/home/pranshu/pk.txt")
    17/05/17 07:08:20 WARN SizeEstimator: Failed to check whether UseCompressedOops is set; assuming yes
    data: .RDD = /home/pranshu/pk.txt MapPartitionsRDD at textFile at :24

    scala> val data1 = data.map(line => line.split(" "))
    data1: .RDD] = MapPartitionsRDD at map at :26

    scala> val data2 = data.flatMap(line => line.split(" "))
    data2: .

The statement `result <- results` represents a generator. It introduces a new variable, `result`, that loops over each value of the variable `results`. We can have as many generators as we want. They loop independently from each other, producing all the possible combinations of their variables.

Given `val executionTimeList = List(("test 1", 100), ("test 2", 230))`, the example loops over the list of results and the list of execution times. Then, we merge the two elements, listing the total number of asserts executed for each test result, along with the execution time.
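The generator behaviour described above can be sketched as follows. Only `executionTimeList`, `results`, `result.id`, and `result.totalAsserts` come from the text; the `TestResult` case class, the sample results, the guard `if result.id == id`, and the element type `(String, Int, Int)` are assumptions made so the example is self-contained.

```scala
object GeneratorsDemo {
  // Hypothetical shape of a test result: only `id` and `totalAsserts`
  // are mentioned in the text, so nothing else is modelled here.
  case class TestResult(id: String, totalAsserts: Int)

  // Invented sample results.
  val results: List[TestResult] =
    List(TestResult("test 1", 10), TestResult("test 2", 2))

  // Values taken from the text.
  val executionTimeList: List[(String, Int)] =
    List(("test 1", 100), ("test 2", 230))

  // Two independent generators: every result is paired with every
  // execution-time entry, giving 2 x 2 = 4 combinations.
  val allCombinations: List[(String, String)] = for {
    result  <- results
    (id, _) <- executionTimeList
  } yield (result.id, id)

  // An assumed guard keeps only the pairs whose ids match, merging
  // the two lists into (id, totalAsserts, time) triples.
  val numberOfAssertsWithExecutionTime: List[(String, Int, Int)] = for {
    result     <- results
    (id, time) <- executionTimeList
    if result.id == id
  } yield (id, result.totalAsserts, time)
}
```

Both generators here loop over a `List`, which is what allows them to appear in the same for-comprehension.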

FlatMap is a transformation operation in Apache Spark that creates an RDD from an existing RDD. It takes one element from an RDD and can produce 0, 1, or many outputs based on business logic. It is similar to the Map operation, but Map produces one-to-one output: if we perform a Map operation on an RDD of length N, the output RDD will also be of length N. For a FlatMap operation, the output RDD can be of a different length, depending on the business logic.
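The length difference described above can be sketched without a cluster. This example uses a plain Scala `List` in place of an RDD, which is an assumption made so it runs without Spark; the `MapVsFlatMap` object, the sample lines, and the `repeated` value are invented for illustration.

```scala
object MapVsFlatMap {
  // Invented sample input standing in for the lines of a text file.
  val lines: List[String] = List("hello world", "map and flatMap")

  // map is one-to-one: 2 input lines give 2 outputs,
  // each output being the list of words in that line.
  val mapped: List[List[String]] =
    lines.map(line => line.split(" ").toList)

  // flatMap applies the same function, then flattens the nested
  // lists: 2 input lines give 5 words.
  val flatMapped: List[String] =
    lines.flatMap(line => line.split(" ").toList)

  // Each input may yield zero, one, or many outputs:
  // 1 gives nothing, 2 gives one element, 3 gives two elements.
  val repeated: List[Int] =
    List(1, 2, 3).flatMap(n => List.fill(n - 1)(n))
}
```

Flattening `mapped` gives the same elements as `flatMapped`, which mirrors the relationship between the data1 and data2 RDDs.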
